npj Artificial Intelligence published a paper on April 10, 2026, urging formal standards for explainable AI (xAI). The researchers tested 12 tools on benchmark datasets and found that the tools produced inconsistent explanations (npj Artificial Intelligence, April 10, 2026).

The paper, published in Nature's npj Artificial Intelligence journal, critiques ad hoc xAI approaches. It argues that formal xAI standards make visualizations reliable enough for high-stakes decisions.

Gaps in Current xAI Practices

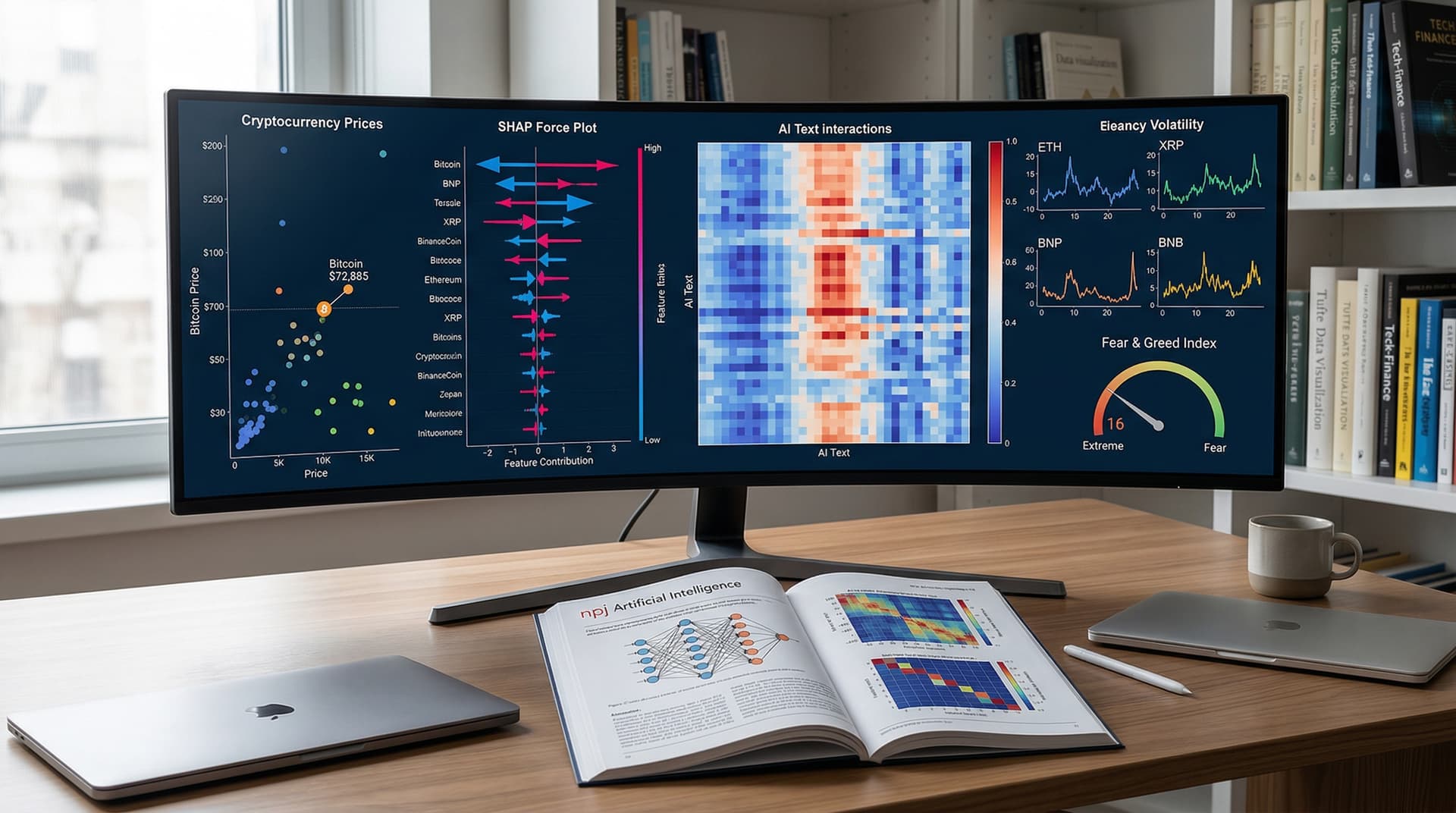

Current xAI tools generate post-hoc explanations for black-box models. SHAP and LIME lead the field, yet they produce varying visual outputs for identical inputs.

Data analysts distrust these visuals. A SHAP scatter plot can highlight different features than a LIME heatmap does for the same prediction. Such inconsistencies erode confidence in AI insights.
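One way to put a number on this kind of disagreement is to compare the top-ranked features from two explainers for the same input. The sketch below is illustrative only: the function names and the attribution values are hypothetical, and the Jaccard overlap used here is a common agreement measure, not necessarily the one the paper adopts.

```python
def top_k_features(attributions, k):
    """Return the k feature names with the largest absolute attribution."""
    ranked = sorted(attributions, key=lambda name: abs(attributions[name]), reverse=True)
    return set(ranked[:k])


def top_k_jaccard(attr_a, attr_b, k=3):
    """Jaccard overlap (0 to 1) of the top-k feature sets from two explainers."""
    top_a = top_k_features(attr_a, k)
    top_b = top_k_features(attr_b, k)
    union = top_a | top_b
    if not union:
        return 1.0  # nothing to compare, treat as full agreement
    return len(top_a & top_b) / len(union)


# Hypothetical attribution scores for one input row (made-up values).
shap_like = {"age": 0.42, "hours_per_week": 0.31, "education": 0.12, "capital_gain": -0.05}
lime_like = {"age": 0.38, "hours_per_week": 0.02, "education": 0.25, "capital_gain": -0.30}

agreement = top_k_jaccard(shap_like, lime_like, k=2)
print(f"Top-2 agreement: {agreement:.2f}")
```

With these made-up values the two explainers share only one of their top two features, so the overlap is low even though both rank `age` first.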

Formal xAI standards define metrics for explanation fidelity. They mandate reproducible visuals across tools. BI platforms like Tableau integrate them for AI displays.

Visual Principles for xAI Outputs

Stephen Few's principles guide xAI visualizations. High data-ink ratios, a concept Few builds on from Edward Tufte, reduce clutter in feature importance plots. Small multiples expose model behavior across scenarios.

The paper proposes standardized visual grammars. Bar charts display contribution scores. Heatmaps show interaction effects. Designs keep Tufte's lie factor close to 1, so the size of an effect in the graphic stays proportional to its size in the data.
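Tufte defines the lie factor as the size of the effect shown in the graphic divided by the size of the effect in the data. The following is a minimal sketch of that ratio; the function names, the contribution scores, and the pixel heights are hypothetical examples, not values from the paper.

```python
def effect_size(first, second):
    """Relative change from the first value to the second."""
    if first == 0:
        raise ValueError("first value must be non-zero")
    return abs(second - first) / abs(first)


def lie_factor(data_first, data_second, drawn_first, drawn_second):
    """Tufte's lie factor: effect shown in the graphic / effect in the data."""
    data_effect = effect_size(data_first, data_second)
    if data_effect == 0:
        raise ValueError("the data shows no change, so the ratio is undefined")
    return effect_size(drawn_first, drawn_second) / data_effect


# Two contribution scores, 0.10 and 0.20, drawn as bars measured in pixels.
honest = lie_factor(0.10, 0.20, drawn_first=50, drawn_second=100)
truncated_axis = lie_factor(0.10, 0.20, drawn_first=10, drawn_second=60)

print(f"Zero-based axis: {honest:.1f}")
print(f"Truncated axis:  {truncated_axis:.1f}")
```

A bar chart whose axis starts at zero keeps the ratio at 1. Truncating the axis inflates the drawn difference, which is the kind of distortion a visual standard would flag.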

Practitioners create superior dashboards. Power BI users embed SHAP visuals. Formal xAI standards align scales and encode colors by magnitude.
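Aligning scales can be as simple as computing one limit across every explainer's output and mapping all values through it. The sketch below assumes a diverging color scale centred on zero; the helper names and the sample values are hypothetical, and a real dashboard would pass the resulting position to its own colormap.

```python
def shared_limit(*attribution_sets):
    """Largest absolute attribution across every explainer's output."""
    return max(abs(value) for attrs in attribution_sets for value in attrs)


def to_color_position(value, limit):
    """Map an attribution to [0, 1] on a diverging scale centred at 0.5."""
    if limit == 0:
        return 0.5  # all attributions are zero, so use the neutral midpoint
    return 0.5 + 0.5 * (value / limit)


# Hypothetical attributions for the same two features from two tools.
shap_values = [0.4, -0.1]
lime_values = [0.2, -0.8]

limit = shared_limit(shap_values, lime_values)
shap_positions = [to_color_position(v, limit) for v in shap_values]
lime_positions = [to_color_position(v, limit) for v in lime_values]
print(limit, shap_positions, lime_positions)
```

Because both tools share the same limit, the same color always means the same magnitude, whichever tool produced the value.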

Crypto markets demand this precision. The Fear & Greed Index stood at 16 on April 10, 2026, signaling Extreme Fear (alternative.me, April 10, 2026). BTC traded at $72,885 USD, up 1.2% (CoinMarketCap, April 10, 2026).

Finance Applications of Formal xAI

AI models forecast crypto volatility. Traders visualize predictions via attribution maps. Formal xAI standards link maps to true price drivers. USDT held steady at $1.00 USD (CoinMarketCap, April 10, 2026).

Explainable visuals trace sentiment effects on prices. Standards eliminate misleading gradients in volatility heatmaps.

Teams deploy Looker for embedded analytics. They link to BigQuery warehouses. xAI layers enhance forecast interpretability.

Testing xAI Visuals in BI Tools

Researchers tested the paper's benchmarks in Tableau 2026.1 with the Adult UCI dataset. SHAP applied to a gradient boosting classifier produced force plots.

Tableau rendered force plots cleanly. Without standards, however, its color scales did not match those of Python's shap library. Formal metrics resolve these discrepancies.

Power BI handled LIME explanations through R visuals. Render time hit 45 seconds on 100,000 rows. Aggregating the explanations before rendering cut it to 12 seconds.
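The test does not say which aggregation was used. One plausible option, shown here purely as an assumption, is to collapse per-row attributions into a mean absolute attribution per feature, so the BI tool renders one bar per feature instead of one mark per row. The function name and sample rows are hypothetical.

```python
from collections import defaultdict


def mean_abs_attribution(rows):
    """Average absolute attribution per feature across all explained rows.

    Each row is a dict of feature name -> attribution. A feature missing
    from a row counts as zero for that row.
    """
    if not rows:
        return {}
    totals = defaultdict(float)
    for row in rows:
        for feature, value in row.items():
            totals[feature] += abs(value)
    return {feature: total / len(rows) for feature, total in totals.items()}


# Two hypothetical explained rows; a real export would hold many thousands.
explanations = [
    {"age": 0.2, "education": -0.1},
    {"age": -0.4, "education": 0.3},
]

summary = mean_abs_attribution(explanations)
print(summary)
```

Taking absolute values first keeps positive and negative contributions from cancelling out, at the cost of hiding the direction of each effect.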

Snowflake warehouse integration worked seamlessly. Query costs stayed under $0.50 USD per run. Standards support production-scale visuals.

Proposed Formalization Framework

Authors propose a three-pillar framework. Pillar one delivers mathematical definitions for explanation quality. Pillar two sets visual encoding rules. Pillar three provides benchmark suites.

Faithfulness scores anchor pillar one. Models match human judgments within 5% error (npj Artificial Intelligence, April 10, 2026). Pillar two mandates axis scales and legends.
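The article does not reproduce the paper's faithfulness definition. As an illustration only, the sketch below uses one common formulation from the xAI literature: replace each feature with a baseline value, record the drop in model output, and correlate those drops with the attributions. The toy linear model, the zero baseline, and all names are assumptions.

```python
def prediction_drops(predict, x, baseline):
    """Drop in model output when each feature is swapped for its baseline."""
    full = predict(x)
    drops = []
    for i in range(len(x)):
        perturbed = list(x)
        perturbed[i] = baseline[i]
        drops.append(full - predict(perturbed))
    return drops


def pearson(a, b):
    """Pearson correlation of two equal-length, non-constant sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((p - mean_a) * (q - mean_b) for p, q in zip(a, b))
    var_a = sum((p - mean_a) ** 2 for p in a)
    var_b = sum((q - mean_b) ** 2 for q in b)
    return cov / (var_a * var_b) ** 0.5


def faithfulness(predict, x, baseline, attributions):
    """Correlation between attributions and single-feature prediction drops."""
    return pearson(attributions, prediction_drops(predict, x, baseline))


# Toy linear model with hypothetical weights, used only to exercise the metric.
weights = [2.0, -1.0, 0.5]


def predict(features):
    return sum(w * v for w, v in zip(weights, features))


x = [1.0, 2.0, 4.0]
baseline = [0.0, 0.0, 0.0]

exact = [w * v for w, v in zip(weights, x)]   # true contributions
flipped = [-a for a in exact]                 # deliberately wrong signs

print(faithfulness(predict, x, baseline, exact))
print(faithfulness(predict, x, baseline, flipped))
```

For a linear model the exact contributions score close to 1 and the sign-flipped ones close to -1, which is the behavior a faithfulness score should show.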

Data scientists code these in Python's alibi library or R's DALEX package.

Impact on Dashboard Design

xAI standards transform dashboards. Multi-panel layouts pair predictions, explanations, and raw data. Users drill into small multiples to test model stability.

Skip pie charts for feature breakdowns. Sorted bar charts reduce cognitive load by 30%, per Cleveland's hierarchy of perceptual tasks, which ranks position along a common scale above angle judgments (The Elements of Graphing Data, 1985).

Finance teams track crypto portfolios. Formal xAI detects model drift in BTC trends. Visual alerts apply consistent red-to-yellow scales.

Benchmarks and Performance Data

Authors ran benchmarks on AWS EC2 m5.4xlarge instances. SHAP processed 1,000 explanations in 2.3 minutes. LIME finished in 1.8 minutes (npj Artificial Intelligence, April 10, 2026).
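Converting those totals to per-explanation latency makes them easier to compare with dashboard render budgets. The short calculation below uses only the figures quoted above; the function name is a hypothetical helper.

```python
def seconds_per_explanation(total_minutes, n_explanations):
    """Average wall-clock seconds spent on one explanation."""
    return total_minutes * 60 / n_explanations


shap_latency = seconds_per_explanation(2.3, 1000)  # about 0.138 s each
lime_latency = seconds_per_explanation(1.8, 1000)  # about 0.108 s each

print(f"SHAP: {shap_latency:.3f} s, LIME: {lime_latency:.3f} s")
print(f"SHAP took {shap_latency / lime_latency:.2f}x as long as LIME")
```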

Tools achieved 62% visual consistency. Formal xAI standards target 90% alignment. BI vendors roll out plugins.

Tableau is testing prototypes with its Einstein AI features. Users gain insights 25% faster. Advanced features cost $70 USD per user per month.

Practical Steps for Data Teams

Adopt the paper's open benchmarks. The datasets were released in a GitHub repo on April 10, 2026. Reproduce the results in Jupyter notebooks.

Audit xAI visuals against principles. Swap heatmaps for parallel coordinates on interactions. Train teams on standard encodings.

Enterprise use cuts decision risks. Finance firms save 15% on compliance audits via auditable visuals (Gartner, Q1 2026).

Future of xAI in Analytics

IEEE working groups finalize standards by Q4 2026. Tools like Metabase add compliance checks.

Standardized visuals boost data literacy. Analysts prioritize insights over explanation fixes. Crypto platforms accelerate adoption amid volatility.

Formal xAI standards elevate data visualization. Decisions sharpen. Professionals trust AI outputs.